There are arguments going on in the market, in vendor negotiations and even in my house about the trustworthiness of the code AI produces. It's a fair concern. But here's the question nobody asks back: can you actually read what your current tools are doing? Not what the icons say. What they're actually doing under the hood. Tools like Alteryx, Microsoft Fabric, SPSS and hell, even Tableau Prep, have built their entire pitch around "your people don't need to learn code, we got you." They sell you on easy to read and easy to audit. But are they? Today we are going to look at why these SaaS black boxes might be the less transparent option, and why open source code, even AI-generated code, gives you more visibility, more flexibility, and more control than the tools you are already paying five figures for. And yes, there's a way to get there without making your team learn Python.

You Can't Read Theirs Either

I was an Alteryx consultant and advocate for close to a decade. I loved the product. But even as someone who knew it deeply, I watched it stall. Alteryx got trapped in a position where it needed to become more open source to stay relevant, but couldn't open up too much without undermining the proprietary model that justified the price tag.

You have to give it to them: their marketing to finance and accounting teams has been phenomenal. They found a niche that isn't overly technical, requires a lot of automation and has access to internal budget. But how long can that hold up against AI? Alteryx can keep telling people that if you can't read Python, you can't trust AI outputs. But the same argument works in reverse: if you can't read what an Alteryx workflow is doing, you can't trust its outputs either. And understanding what Alteryx is actually doing requires a specialist and a $4,950 per user per year license, with enterprise deployments easily exceeding $1M annually.

The same tension is playing out with Microsoft Fabric. Coalesce's guide to Fabric data transformation (November 2025) documented what engineering teams keep running into: Dataflow Gen2 performance becomes unpredictable at scale, version control remains a challenge, and lack of visibility creates anxiety around changes. One healthcare analytics consultant discovered Fabric silently dropping hundreds of rows during transformations due to an undocumented metadata refresh delay. The fix required manually calling a REST API. That's the kind of thing that doesn't show up in a drag-and-drop interface but absolutely shows up in a financial audit.

This isn't a single-vendor complaint. It's a category problem. Every GUI-based ETL tool shares a fundamental design choice: hide the logic to make the interface feel simple. That's great for onboarding. It's terrible for auditing. Most workflows in these platforms don't stay clean for long. They grow into a tangle that you eventually hire someone to untangle. So how do you start building trustworthy analytics with AI when you don't really understand Python or other open source languages?

Code Is the Audit Trail

With drag-and-drop tools, the audit trail is the visual workflow. But do you actually know everything those nodes are doing? If there is a bug inside a node, or someone configured a tool in a way that quietly changes the output, what is there to tell you that? In Fabric, audit logs only recently moved beyond preview and still require T-SQL queries to access. In Alteryx, users have historically resorted to screenshots and unofficial third-party macros to produce documentation for their audit teams. These are the gaps that make it hard to defend your position when something goes sideways in an audit. None of these tools are going to bail you out.

Even though coding sounds scary, this is where Python and Git actually give you more insight and traceability. A Python script is searchable. You can diff two versions to see exactly what changed. Every edit is tracked in Git with a record of who changed it, when, and why. You can open it in any text editor on earth without a license.
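To make "diff two versions" concrete, here is a minimal sketch using Python's built-in `difflib` module. The two script versions and the file names `clean_v1.py` and `clean_v2.py` are hypothetical, made up for illustration. Git shows the same kind of output, with the author, date and commit message attached.

```python
import difflib

# Two hypothetical versions of the same cleaning step (illustrative only).
old_version = """\
df = df[df["amount"] > 0]
df = df.dropna()
total = df["amount"].sum()
"""

new_version = """\
df = df[df["amount"] >= 0]
df = df.dropna(subset=["amount"])
total = df["amount"].sum()
"""


def show_changes(before: str, after: str) -> list[str]:
    """Return a unified diff of two script versions, one entry per line."""
    return list(
        difflib.unified_diff(
            before.splitlines(),
            after.splitlines(),
            fromfile="clean_v1.py",  # hypothetical file names
            tofile="clean_v2.py",
            lineterm="",
        )
    )


changes = show_changes(old_version, new_version)
print("\n".join(changes))
```

Lines starting with `-` were removed and lines starting with `+` were added. The unchanged `total` line appears only as context, so a reviewer sees exactly which two steps changed and nothing else.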

The Audit Analytics community, which serves professional auditors, makes this case directly. They note that Git provides audit traceability for Python analyses and that scripts can be shared with colleagues and reviewers without needing identical software licenses. A well-structured Python script, they argue, reads almost like pseudocode: clear, traceable, and built for collaboration within an audit team.

A proprietary workflow gives you none of that. Want your external auditor to verify an Alteryx workflow? They need a license. Want to search across all your Fabric transformations for a specific logic pattern? Not without digging through each one manually. Want to compare two versions of a pipeline to see what someone changed last quarter? Good luck doing that with either platform.
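With plain scripts, that search is a few lines of code. The sketch below assumes your pipelines live as `.py` files in one folder. The function name `find_pattern` and the two demo scripts are hypothetical, and a command-line tool like `grep` or your editor's search would do the same job.

```python
import re
import tempfile
from pathlib import Path


def find_pattern(root: Path, pattern: str) -> list[tuple[str, int, str]]:
    """Search every .py file under root; return (file name, line number, line)."""
    regex = re.compile(pattern)
    hits = []
    for path in sorted(root.rglob("*.py")):
        lines = path.read_text(encoding="utf-8").splitlines()
        for number, line in enumerate(lines, start=1):
            if regex.search(line):
                hits.append((path.name, number, line.strip()))
    return hits


# Demo on a throwaway folder holding two hypothetical pipeline scripts.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "revenue.py").write_text("df = load()\ndf = df.dropna()\n", encoding="utf-8")
    (root / "costs.py").write_text("df = load()\ndf = df.fillna(0)\n", encoding="utf-8")

    # Find every place a pipeline drops rows with missing values.
    hits = find_pattern(root, r"\.dropna\(")
    for name, number, line in hits:
        print(f"{name}:{number}: {line}")
```

The result names the file and line for every match, which is the kind of answer an auditor can check without opening each workflow by hand.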

Yes, there will be some training involved. But I've also watched users sit through five hours of Alteryx training and still struggle to find the join tool. And Fabric requires significant time investment in training and adapting existing workflows, with Microsoft's own tooling complexity leading to longer adoption periods. The learning curve argument cuts both ways. The difference is that when you invest in code literacy, you get transparency that actually holds up under scrutiny. When you invest in a SaaS license, you get a visual layer that feels transparent but isn't.

So how do you start building that trust with open source code? We built something that might help.

Ophi: The Verification Layer

The question I keep hearing in my daily role is some version of "how do I get started using more agentic tools and less old school drag-and-drop?" And every time, the barrier is the same: "I don't know Python."

But here's the thing. With Claude Code, Cursor and the wave of AI coding tools available right now, you can build solutions without writing code from scratch. This past weekend I was experimenting with a new Claude Code plugin and thought: what if I could make Python more transparent to Alteryx, Fabric and Excel users? Ten minutes later I had a working app. That's when Ophi was born.

A lot of people have already started hacking at converting proprietary workflows into Python, and some of those projects are genuinely clever. But I thought about the problem from the other direction. Say you're non-technical and you get a script from ChatGPT or Claude. It runs. It produces output. But how do you trust what it did? That's what Ophi solves. You take whatever code your AI chatbot produced and Ophi translates each line into its Alteryx or Excel equivalent, so you can verify AI-generated code in the language you already think in. Not a summary. A line-by-line explanation that helps you relate each operation to the analysis you already understand.

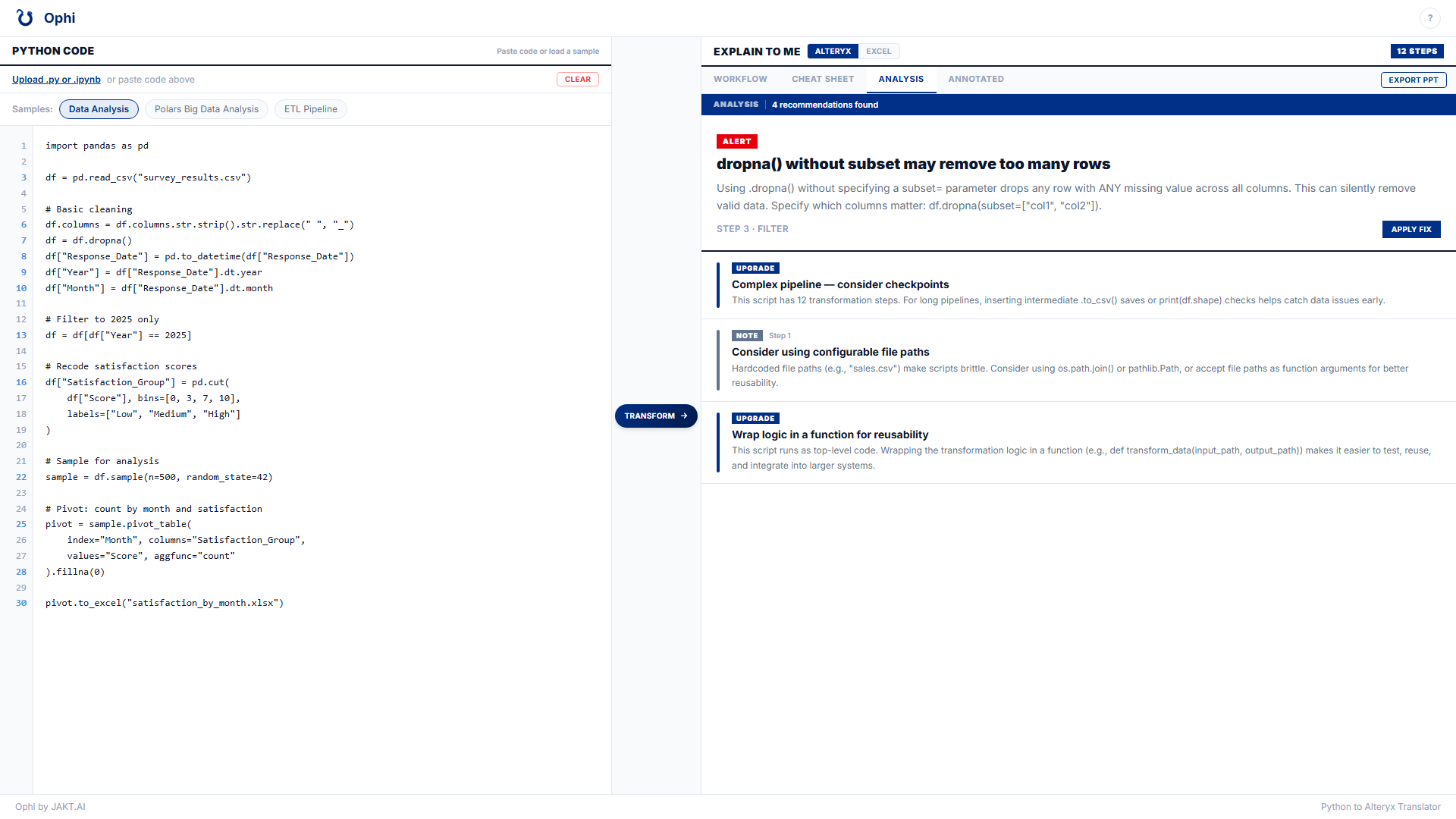

Ophi's Analysis tab catches issues your drag-and-drop tool would silently ignore, like a dropna() that removes more rows than you intended, and offers to fix them for you.
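For readers who haven't met `dropna()` before, here is a small pandas sketch of the kind of over-deletion that caption describes. The invoice data is made up, and this illustrates the pitfall itself, not how Ophi detects it.

```python
import pandas as pd

# Hypothetical sample: 'amount' is required, 'notes' is optional.
df = pd.DataFrame(
    {
        "invoice": [101, 102, 103, 104],
        "amount": [250.0, None, 75.5, 40.0],
        "notes": [None, "rush", None, "paid"],
    }
)

# A bare dropna() removes a row if ANY column is missing,
# so invoices with a blank note disappear too.
dropped_any = df.dropna()

# Restricting the check to the required column keeps those rows.
dropped_amount_only = df.dropna(subset=["amount"])

print(f"rows in: {len(df)}")
print(f"after dropna(): {len(dropped_any)}")
print(f"after dropna(subset=['amount']): {len(dropped_amount_only)}")
```

Only one of the four invoices is actually missing an amount, yet the bare call throws away three. Printing row counts before and after each step is a cheap habit that makes this kind of loss visible.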

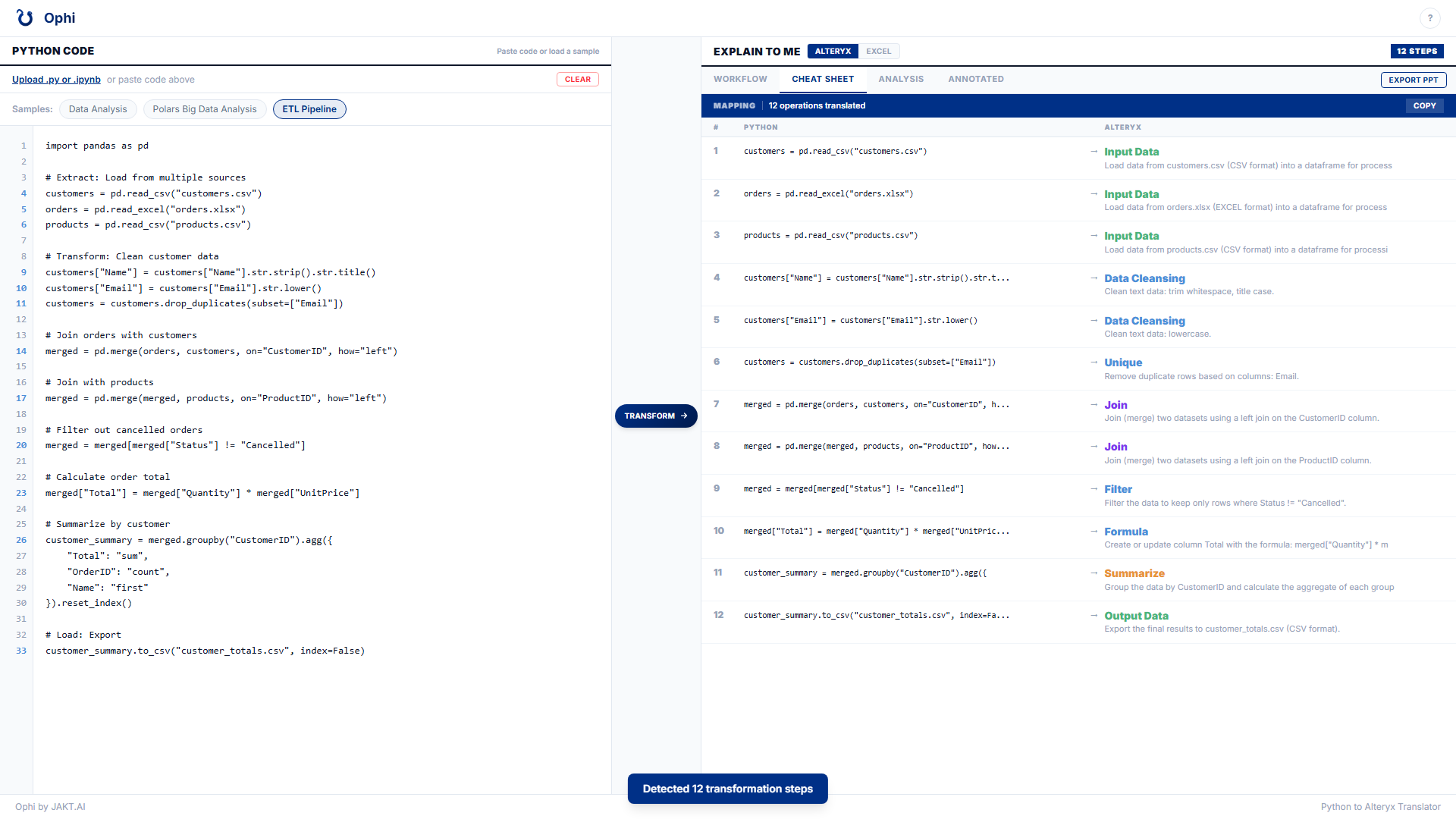

Ophi's Cheat Sheet maps every Python operation to its Alteryx equivalent, line by line, so you can verify what the code is doing in the language you already know.

This doesn't replace learning Python. But it makes the transition approachable. It moves Python out of the blocker column and turns it into something you can verify, learn from, and build confidence with. And it's free. Free tools like this are starting to replace what companies are spending millions to keep alive. Check out the source code on GitHub.

The data supports why this matters. Stack Overflow's 2025 developer survey found that 84% of developers use AI tools but only 29% trust them. And those are developers who can actually read the code. For non-technical users, the gap is even wider. Sonar's 2026 State of Code survey of over 1,100 developers found that 96% don't fully trust that AI-generated code is functionally correct, yet only 48% verify it before committing. The bottleneck has shifted from writing code to verifying it. That verification layer is exactly what was missing. It's not missing anymore.

The Economics Won't Wait For You

I'll be fair. Python isn't perfect. Libraries update and sometimes break your code. Dependencies shift. You need someone paying attention to maintenance. But when a Python library breaks, you can read the changelog, pin the version, and fix it yourself. When your vendor pushes an update that breaks your pipeline, you open a support ticket and wait. SaaS tools have the same maintenance problem. The difference is that one of them lets you see what broke and the other one doesn't.
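"Pin the version" means recording the exact library versions your script was tested with, usually in a requirements file with lines like `pandas==2.2.2`. As a rough sketch, the check below compares a set of pins against what is actually installed, using the standard library's `importlib.metadata`. The function name `check_pins`, the version number and the second package name are all illustrative assumptions.

```python
from importlib import metadata


def check_pins(pins: dict[str, str]) -> dict[str, str]:
    """Compare pinned versions with what is installed in this environment."""
    report = {}
    for package, pinned in pins.items():
        try:
            installed = metadata.version(package)
        except metadata.PackageNotFoundError:
            report[package] = "not installed"
            continue
        if installed == pinned:
            report[package] = "ok"
        else:
            report[package] = f"drift: {installed} installed, {pinned} pinned"
    return report


# Hypothetical pins; in practice these would come from a requirements file.
pins = {"pandas": "2.2.2", "package-that-does-not-exist-xyz": "1.0.0"}
for package, status in check_pins(pins).items():
    print(f"{package}: {status}")
```

If an update breaks something, a report like this tells you which library moved and by how much, and the fix is often as simple as reinstalling the pinned version.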

The economics have shifted and they aren't shifting back. AI inference costs have dropped roughly 100x in two years. The no-code AI market went from $5.84 billion in 2024 to a projected $36.50 billion by 2032. Meanwhile your Alteryx license didn't get cheaper and your Fabric capacity tier didn't scale down. When something breaks in your pipeline today, you can stand up a Python solution for free in the time it takes your vendor to acknowledge the support ticket. Showing your work changes minds. And the tools to show your work have never been cheaper.

"I don't understand Python" is a real barrier. But it's a shrinking one, and the cost of not crossing it is growing. Amazon CTO Werner Vogels described the shift at AWS re:Invent 2025: when machines write the code, you have to rebuild comprehension during review. Tools like Ophi that can be built in an hour for less than a dollar in tokens exist to close that gap. Start with one workflow. Let AI generate the Python. Run it through Ophi. If the explanation matches what you expected, you just verified an AI output for free that would have cost you thousands inside a black box. The alternative is continuing to pay millions for platforms that can't show you what they did.

Subscribe to Syllabi for weekly insights from the classroom to the boardroom. New articles every Monday at 9am.

Follow JAKT.AI on LinkedIn for the conversation.