There is a war inside every analyst.

The work you were hired to do (find signal, tell stories with data, change how a business thinks) sits on one side. On the other side is the work that actually fills your day. Writing SQL. Formatting charts. Fixing broken pipelines. Resizing screenshots for a slide deck nobody will read past page three.

Call it Resistance. The force that keeps you from doing the real work. For analysts, Resistance wears a lab coat. It disguises itself as rigor. It says you need to hand-tune that axis label. It says you need to write the CSS yourself. It says the work is the work.

It is not. The work is the insight. Everything else is scaffolding.

This week I tested what happens when the scaffolding builds itself.

The Prompt

I gave Claude Cowork, Anthropic's desktop automation agent, a single instruction:

Query my Snowflake pricing table. Build a Streamlit dashboard styled like Target for pricing elasticity. Run it headless. Take screenshots. Write an article about the whole process. Push it to GitHub.

One prompt. No code. No templates. No hand-holding.

What happened next is both the promise and the honest reality of where AI tooling stands today.

It Pulled the Wrong Data

The first thing Claude did was connect to Snowflake and look for a pricing table. It found a table that looked close enough and started building. Revenue trends, variance analysis, clean aggregations.

The queries were technically flawless. Perfectly structured SQL. Not a single syntax error.

But it was the wrong data entirely.

The AI did what analysts do every day. It found data that looked close enough, and it started building before confirming it had the right source.

I said five words: "You pulled the wrong dataset."

No defensiveness. No sunk cost. Claude ran SHOW TABLES, found the actual PRICING table with 624 rows of weekly retail pricing data across 12 products, and pivoted immediately. Coffee, tea, snacks. Base prices, sale prices, unit volumes.

This is the part most people miss when they talk about AI replacing analysts. The agent made a mistake. A human caught it. The agent corrected in seconds. That loop (AI proposes, human steers, AI executes) is not a failure of the technology. It is the future operating model.
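One way to catch a close-enough table before anything gets built is a pre-flight check: compare each candidate table's columns against the fields the analysis needs. The sketch below is illustrative only. The `tables_with_columns` helper, the SALES_SUMMARY table, and every column name are assumptions made for the example, not what the agent actually ran.

```python
def tables_with_columns(schema, required):
    """Return the names of tables that contain every required column."""
    required = {name.upper() for name in required}
    return sorted(
        table
        for table, columns in schema.items()
        if required <= {name.upper() for name in columns}
    )


# Hypothetical metadata, e.g. gathered from SHOW TABLES plus a column listing.
schema = {
    "SALES_SUMMARY": ["WEEK", "REVENUE", "VARIANCE"],
    "PRICING": ["WEEK", "PRODUCT", "BASE_PRICE", "SALE_PRICE", "UNITS"],
}

# Fields a pricing elasticity analysis would need (assumed names).
matches = tables_with_columns(schema, ["BASE_PRICE", "SALE_PRICE", "UNITS"])
print(matches)
```

With this made-up metadata only PRICING qualifies, so a revenue table that merely looks close would be ruled out before a single chart is drawn.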

The Dashboard Appeared

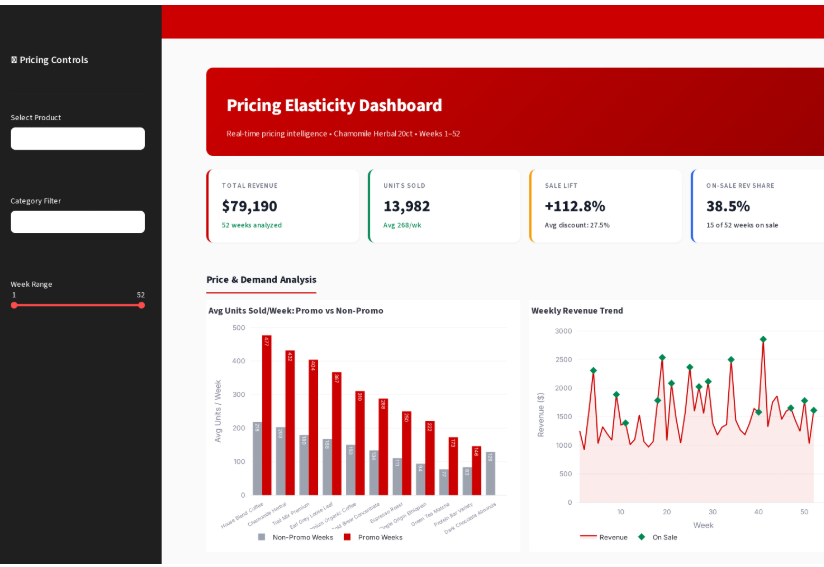

From the correct data, Claude built a Streamlit application with Target's design language. Red gradient banner. White KPI cards with colored accent borders. Dark sidebar with product selectors. The whole thing rendered in Plotly with interactive charts.

Four KPI cards update when you switch products. A dual-axis chart plots units sold against actual price week by week. A scatter plot with a trendline reports the price elasticity coefficient. A horizontal bar chart ranks all 12 products by elasticity.

I did not write a line of this. I did not pick the colors, choose the chart types, or decide on the layout. The agent made those decisions, and they were defensible ones. Not perfect. I would have done a few things differently. But defensible. Shippable.
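How the dashboard arrived at its elasticity coefficient is not shown here. A common approach, and the one assumed in this sketch, is a log-log fit: regress the log of units on the log of price and read the slope as the percent change in units per one percent change in price. The weekly numbers below are invented for illustration and do not come from the PRICING table.

```python
import math


def price_elasticity(prices, units):
    """Least-squares slope of log(units) on log(price)."""
    xs = [math.log(p) for p in prices]
    ys = [math.log(q) for q in units]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Hypothetical weekly observations for one product (two promo weeks).
prices = [4.99, 4.99, 3.99, 4.99, 3.49, 4.99]
units = [100, 104, 160, 98, 215, 101]

elasticity = price_elasticity(prices, units)
print(f"elasticity = {elasticity:.2f}")
```

A slope below -1 means demand is price-elastic: units move proportionally more than price does. Ranking products by this one number is what a bar chart like the dashboard's would need.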

The Screenshots Were All the Same

Here is the second course correction.

Claude launched the dashboard headless with Playwright, cycled through five different products, and captured five screenshots. Technically, it executed flawlessly. Every image rendered. Every file saved. The automation was pristine.

But when I opened the images at article size, they were functionally identical. Five views of the same red-and-gray dashboard layout. Different numbers, same visual story. At blog width, you could not tell them apart. The agent had automated the capture perfectly and produced nothing useful.

This is Resistance wearing an engineering hat. The screenshots were technically correct. The pipeline worked. But the output did not serve the reader. A human looked at it and said: these all look the same. Build something different.

So Claude scrapped the dashboard screenshots entirely and built distinct analytical visualizations from the raw data. Not screenshots of a dashboard. Purpose-built charts, each telling a different story. The agent needed a human to say: this does not work for the reader. Fifteen seconds later, it was building something better.

What the Data Actually Says

The agent gave me analysis in minutes. Most of the 12 products are price-elastic. Promotions drive real lift. But the revenue story is more complicated. When units triple and revenue only doubles, the discount is eating the margin. That tension between volume and profitability is the question that actually matters, and the agent surfaced it before I finished my coffee. Knowing which question to ask next, that is still the analyst's job. For now.
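The triple-units, double-revenue case is worth working through. If units go up 3x while revenue goes up only 2x, the average selling price must have dropped to two thirds of the base price, a discount of about 33 percent. What that does to profit depends on unit cost, which the data described here does not include. The sketch below assumes a base price of 6.00 and a unit cost of 3.00 purely for illustration.

```python
def promo_summary(base_price, unit_cost, base_units, unit_multiple, revenue_multiple):
    """Back out the promo price and compare gross profit with and without the promo."""
    base_revenue = base_price * base_units
    promo_units = base_units * unit_multiple
    promo_revenue = base_revenue * revenue_multiple
    promo_price = promo_revenue / promo_units
    return {
        "promo_price": promo_price,
        "discount": 1 - promo_price / base_price,
        "base_profit": (base_price - unit_cost) * base_units,
        "promo_profit": (promo_price - unit_cost) * promo_units,
    }


# Assumed figures: price, cost, and baseline volume are hypothetical.
summary = promo_summary(
    base_price=6.00,
    unit_cost=3.00,
    base_units=100,
    unit_multiple=3,
    revenue_multiple=2,
)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

Under these assumptions the promo price works out to 4.00 and gross profit is 300 in both cases: three times the volume, twice the revenue, and not a dollar more profit. With a higher unit cost the promotion would lose money outright.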

The Honest Version

This was not seamless. Every step had friction. But every problem resolved in seconds, not hours.

Compare that to the traditional version. You write the SQL. You debug the SQL. You build the dashboard. You tweak the CSS. You realize the chart library does not support dual axes the way you want. You export screenshots manually. You resize them in Figma. You write the article in a Google Doc. You copy-paste code blocks. You push to git. You open a PR. That is a day. Maybe two.

This took fifteen minutes. Imperfect, honest, shippable fifteen minutes.

Here is what I did not do during this entire process. I did not write SQL. I did not write Python. I did not install packages. I did not configure a Streamlit layout. I did not launch a browser. I did not take screenshots. I did not format images. I did not write a git commit message.

Here is what I did. I said "you pulled the wrong dataset." I said "these all look the same." I reviewed the output. I decided whether it was good enough to ship.

The Future Is Editorial

Five seconds of steering versus fifteen minutes of autonomous execution. That ratio is the future job description.

The analysts who thrive in the next five years will not be the ones who write the best SQL. They will be the ones who know which question to ask, who sense when the output is off before they can articulate why, who decide when the work is done.

The work is changing. Not disappearing. From production to direction. From building to steering. From typing to thinking.

Resistance will adapt. It always does. It will tell you the AI output is not quite right. It will tell you to rebuild it yourself just to be sure. It will dress up as quality control.

Do not listen.

The real work was never the SQL. Ship the insight. Move to the next question.

That is turning pro.

One More Thing

This article was also written by Claude.

The same agent that needed two course corrections in fifteen minutes turned around and wrote the honest account of everything it got wrong. It did not spin the mistakes. It did not bury them in a footnote. It framed them as the point of the piece.

That is the meta lesson. The tool that makes the mistakes is also the tool that documents them, reflects on them, and ships the writeup. The human still decides what is worth saying and whether it was said honestly.

You are reading the output of an AI that was told to be transparent about its own limitations. Whether it succeeded is your call, not mine.